Humans are remarkably dexterous: we can fumble around in a pocket and find our house key, wash delicate glassware without breaking it, and somehow hang laundry while balancing an armload of it. But getting a robot to complete any of those tasks naturally, without breaking or dropping things, is far more challenging. Robots excel at high-accuracy, repetitive pick-and-place tasks and non-contact tasks like spray painting and welding – for these tasks, robots are often much faster and more accurate than humans.

One approach to providing human-level dexterity for robots is to collect data from human performance of a task, learn a policy from that data that has both the perception and action required to do the task, and then execute that policy on a robot. This approach requires translating human behavior into something a robot can learn and replicate in new environments.

For decades, roboticists attempted this translation through analytical decomposition: task and motion planning frameworks built on hand-engineered grasp primitives, force strategies, and symbolic task representations (Lozano-Pérez et al., 1989; Mason, 1981; Cutkosky, 1989). While these methods produced elegant solutions for specific problems, they could not scale to the diversity of unstructured, real-world manipulation.

End-to-end learning methods have shown that robots can acquire new skills directly from data, bypassing the need to hand-engineer every behavior. Vision-language-action (VLA) models can now interpret a scene, understand a task instruction, and produce motor commands from a single learned policy – as seen in recent demonstrations of constructing boxes and folding clothing by Generalist AI, Physical Intelligence, Sunday Robotics, and Figure.

However, multi-step, dynamic, force-mediated tasks remain well beyond what today’s robots can reliably accomplish with VLAs. People routinely perform tasks such as cooking dinner, cleaning the entire house, or changing out a light switch, drawing on a lifetime of experience. The dexterity a robotic arm needs to perform these tasks reliably has yet to be developed.

Robotic Data Collection Methods

Robot learning relies on a variety of data, and the choice of data source determines what kinds of tasks a policy can ultimately perform. Each method makes different trade-offs between scale, fidelity, and the kinds of physical signals it can capture. The five main data sources the field draws on today are internet-scale data, simulation, egocentric video, teleoperation, and handheld collection. At RAI, we are exploring three of these techniques: simulation, teleoperation, and handheld grippers.

Internet-scale data

Internet-scale video and image data provide rich visual and semantic representations of the world. Large vision-language models (VLMs) trained on this data can recognize objects, understand spatial relationships, and reason about the pre- and post-conditions of tasks. These representations increasingly serve as the perceptual backbone for robot policies. Audio data is similarly abundant online, providing a foundation for pre-training. But force data, tactile data, and synchronized binocular vision at the scale required for training manipulation policies are missing from these training sets.

Simulation data

Simulation supports two distinct uses in robot learning: generating synthetic sensor data (rendered camera views from physical camera locations, depth maps, contact signals) for training perception and end-to-end visuomotor policies, and parallelizing behavior learning, where thousands of virtual robots train simultaneously through reinforcement learning. Both benefit from the ease of generating simulated data at scale. Simulation is most successful for pre-training base models that are then fine-tuned on task-specific simulation data or data from sources that are guaranteed to match the robot and task closely. The open challenge is that simulated physics does not always capture the complexity of real-world interactions, particularly with flexible objects or rapidly changing contacts. Closing this sim-to-real gap remains an active research area.

Egocentric video collection

Egocentric video collection, where cameras are mounted on a person’s head or glasses with tracking of hand configurations and wrist positions, offers a way to collect data in the wild at low cost. The person is unencumbered and can perform tasks at their natural cadence. But video-based hand tracking suffers from occlusion and limited accuracy, particularly for tasks that involve manipulating small objects easily hidden by the hand. Human hand data must be retargeted onto the robot hand, which generally has fewer and different degrees of freedom. Egocentric video also cannot capture force data, and any sensory information used by the human that is not captured in the video feed is lost.

Teleoperation

Real-world teleoperation puts a physical robot in the task environment, controlled remotely by a human operator using VR interfaces or kinematic translation devices. This approach generates real, robot-aligned physical data: if the human operator can do the task using the teleoperation interface, an appropriate control policy should enable the robot to complete it too. Some teleoperation setups remove the operator from the task workspace, so the operator views the workspace at a distance and without tactile feedback. The resulting loss of subtle force and contact cues slows the human operator, and that slower cadence is inherited by the policy learned from their demonstrations. To recover this missing signal, you must either add haptic feedback to the teleoperation rig or include force information captured by other methods in the training data.

Handheld data collection

Handheld data collection has the human perform the task directly while operating a purpose-built gripper that mimics a robot hand. The operator receives proprioceptive feedback through the mechanism as they manipulate real objects, and force sensors in the gripper log that interaction for training. The setup is intuitive enough that, after brief practice, operators perform many tasks at near-natural cadence. The trade-offs are an embodiment gap between a human arm holding a gripper and a robot arm holding the same gripper, and reduced dexterity compared to bare-handed manipulation. Within those limits, handheld collection preserves both human cadence and force feedback in a way that other collection methods cannot.

Our position is that the most promising path to robotic dexterity for contact-rich manipulation runs through handheld data collection with co-designed grippers, where the device a human operates and the gripper a robot executes with are kinematically and sensorially identical.

Advancing Dexterous Manipulation through Handheld Data Collection

One advantage of handheld data collection is that the natural dexterity of the human operator still comes through in the performance, because the operator is not so encumbered as to become clumsy. There is no embodiment gap between the robot hand and the handheld gripper as they are kinematically matched. And the sensor signals required for good performance, including force, can be recorded from the handheld gripper using sensors that match the characteristics of those in the robot hand.

Force is particularly important for manufacturing, assembly, and disassembly because these tasks are tight-tolerance and contact-rich. Consider the process of loosening a stuck bolt. Until the bolt breaks free and starts to turn, there is increasing torque being applied, but no visible motion, and hence a purely vision-based policy will just position the wrench on the bolt but not apply the necessary torque to break it free.
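The stuck-bolt case can be made concrete with a toy model. The sketch below is illustrative only: the breakaway threshold, the torque values, and the 5-degree step are made-up numbers, and `simulate_bolt` is a hypothetical helper, not part of any system described here. It shows that every pre-breakaway sample has the same visible angle while the torque readings differ.

```python
# Toy model of loosening a stuck bolt (all numbers are made up for illustration).
BREAKAWAY_TORQUE_NM = 40.0  # hypothetical static-friction limit


def simulate_bolt(applied_torques_nm):
    """Return (torque, angle_deg) samples for a sequence of applied torques."""
    angle_deg = 0.0
    broke_free = False
    samples = []
    for torque in applied_torques_nm:
        if torque >= BREAKAWAY_TORQUE_NM:
            broke_free = True
        if broke_free:
            angle_deg += 5.0  # bolt turns a little each step once it is free
        samples.append((torque, angle_deg))
    return samples


samples = simulate_bolt([0.0, 10.0, 20.0, 30.0, 40.0, 15.0])
pre_break = [s for s in samples if s[1] == 0.0]

# What a camera could observe before breakaway: the angle, which never changes.
visible_states = {angle for _, angle in pre_break}
# What a torque sensor reports over the same steps: a clear ramp.
torque_readings = [torque for torque, _ in pre_break]

print(visible_states)   # a single value: the bolt has not moved
print(torque_readings)  # rising torque over the same samples
```

In this toy setting, a policy that sees only the angle gets the same input at every pre-breakaway step, so it has no basis for deciding to keep increasing torque.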

Vision also faces a more fundamental limit for force-mediated tasks. Even at the resolutions current vision transformers operate at, and even as those resolutions continue to climb, there are physical states that are visually indistinguishable. A cupped hand gently resting on a bottle cap and one squeezing it with significant force can produce nearly identical images. Higher resolution helps, but the gap between “pressing” and “resting” is not primarily a resolution problem. It is a modality problem.

Additionally, handheld grippers with onboard sensors and cameras are easy to manufacture and put in the hands of operators – no robot or workspace needed. This flexibility allows the creation of a diverse dataset of environments, conditions, and strategies for completing the task. Because learned models generalize largely by interpolating between training examples, we need enough data covering the entire range of physical interactions to allow models to generalize effectively. This means models must be trained on data gathered outside of controlled lab environments, in more unstructured, real-world settings.

Co-Designing Grippers for Data Collection and Robot Execution

When data is collected through human demonstration, the target user of a robot hand is no longer just the robot system and a roboticist behind a screen. The designer must now consider a second user – the operator.

Early experiments that retrofitted existing robot grippers for handheld use, by adding tracking cameras and encoders to off-the-shelf end effectors, quickly revealed ergonomic problems that degraded data quality. These experiences made clear that data collection devices and robot end effectors need to be developed in unison.

We co-designed both the handheld interface for demonstration and the actuated gripper for robotic execution as a single unified platform. The linkages, the finger geometry, the kinematics: these are shared between both variants. What the camera sees during human demonstration is geometrically identical to what it sees during robot execution. The forces the human applies through the mechanism map directly to the forces the robot’s actuators can produce, and the recorded sensor data show what signals to expect during successful task completion.

This match matters for policy transfer. A robot policy is, at its core, a function that maps observations to actions. The simplest way for that mapping to be reliable is to have consistency between the observations during training and deployment. A well-conditioned policy requires that the current observation unambiguously determine the correct next action. If the geometry changes between demonstration and execution, if the camera viewpoint shifts, or if force profiles drift between the two configurations, the policy encounters states it has never seen and fails.
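One simple way to picture this consistency requirement is a range check: record the span of each observation channel seen in demonstrations, then flag deployment observations that fall outside it. The sketch below is a minimal illustration under stated assumptions. The channel names, the values, and the 10% margin are hypothetical, and a range check is only a crude stand-in for a real distribution-shift test.

```python
def fit_ranges(demo_observations):
    """Record the min and max seen for each observation channel."""
    channels = demo_observations[0].keys()
    return {
        name: (
            min(obs[name] for obs in demo_observations),
            max(obs[name] for obs in demo_observations),
        )
        for name in channels
    }


def out_of_range_channels(observation, ranges, margin=0.1):
    """List channels whose value falls outside the demonstrated range.

    `margin` widens each range by a fraction of its span (an arbitrary choice).
    """
    flagged = []
    for name, (low, high) in ranges.items():
        slack = margin * (high - low)
        if not (low - slack <= observation[name] <= high + slack):
            flagged.append(name)
    return flagged


# Hypothetical demonstration data from the handheld device.
demos = [
    {"grip_width_mm": 10.0, "grip_force_n": 0.0},
    {"grip_width_mm": 60.0, "grip_force_n": 25.0},
]
ranges = fit_ranges(demos)

# A robot observation that matches the demonstrations, and one whose force drifted.
matched = out_of_range_channels({"grip_width_mm": 35.0, "grip_force_n": 12.0}, ranges)
shifted = out_of_range_channels({"grip_width_mm": 35.0, "grip_force_n": 50.0}, ranges)
print(matched, shifted)
```

When the demonstration device and the robot gripper share geometry and sensors, checks like this should rarely fire. When they differ, flagged channels point to where the policy is being asked to act on inputs it never saw.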

Co-design eliminates an entire class of these transfer failures. The human and the robot operate the same mechanism, in the same configuration, with the same sensor suite. Co-design is also meant to make the most of human ability and intuition in this training approach. If the device is too cumbersome or complicated to use, operator performance degrades. Ergonomics is a data quality issue: if the operator is fatigued or uncomfortable, the resulting policy will suffer.

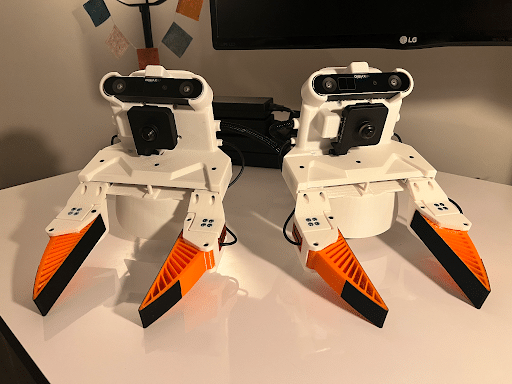

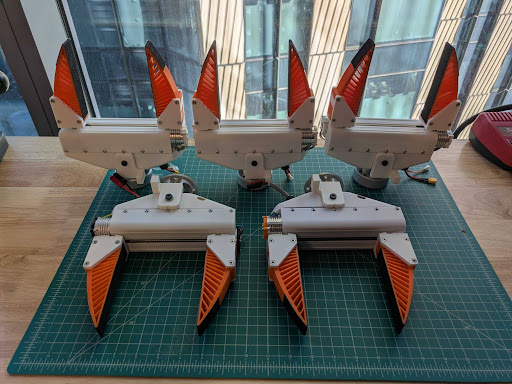

The Evolution of Co-Designing Grippers

Our first motorized gripper with a matching handheld capture device was built on the UMI platform (Chi et al., 2024), which demonstrated that handheld data collection could produce effective robot policies using only position data. We improved on the original handheld device by making the mechanism more robust, replacing rack-and-pinion elements with linkages to eliminate backlash, adding magnetic position encoders and force sensors, and refining the tracking camera placement. Pointing the tracking camera upward allowed us to maintain tracking across more diverse environments as distractor objects moved through the scene.

A parallel-jaw gripper can accomplish a surprising range of tasks, but there is a limit to what tasks can be done with a single degree of freedom, particularly for tool use and in-hand manipulation. Even practiced operators found it difficult to wield a ratchet, turn a screwdriver, or hold a nail steady while hammering it with the other hand. Given this experience, our next co-design targeted force-mediated assembly and tool-use tasks, prioritizing power grasps, pinch grasps, and the ability to wield human tools.

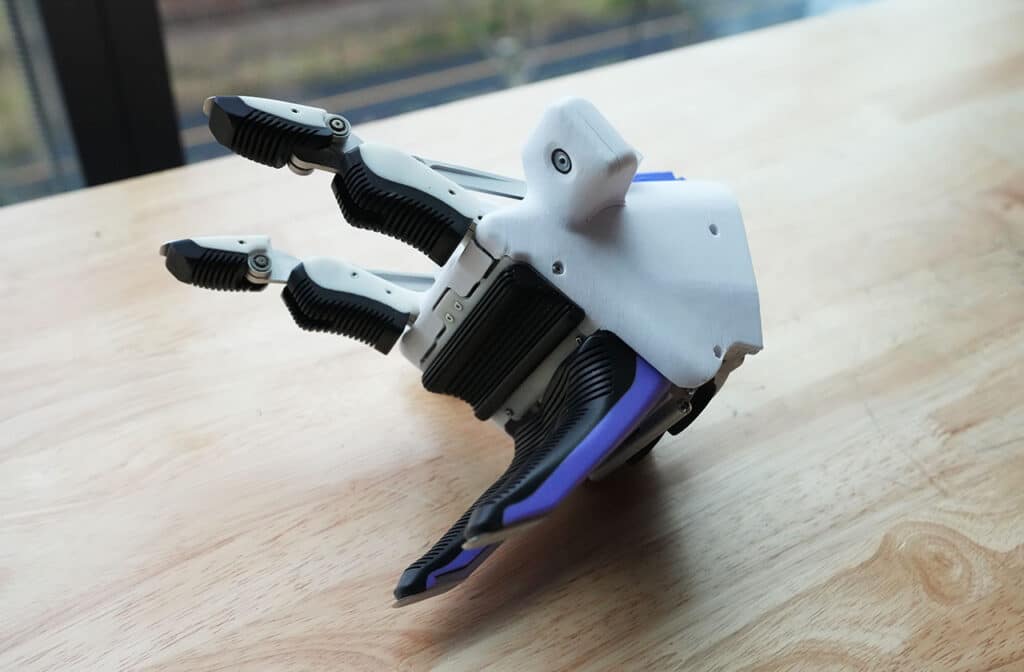

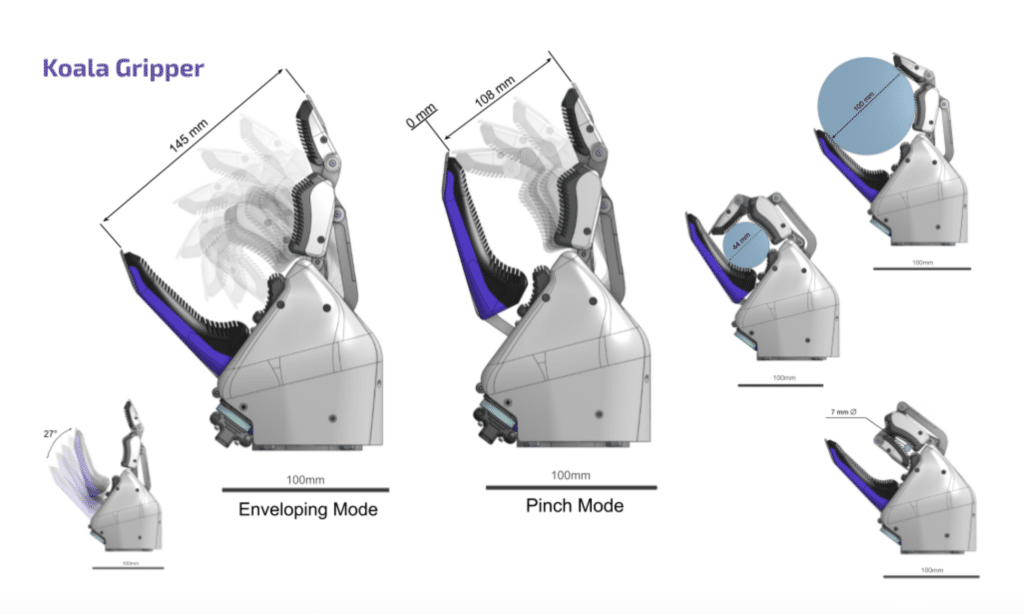

The Koala Platform

The Koala platform, named after the double-thumbed marsupial, was designed to address this class of tasks. Koala comprises two variants: a handheld, hand-powered gripper for human data collection and an electrically actuated gripper for robotic execution. The design methodology was a true co-design, in which the requirements accounted for both the human operator and the performance of the robotic gripper. The design incorporates a pivoting monolithic double thumb and two underactuated linkage-based fingers, resulting in three controllable degrees of freedom and two that are underactuated. Combined with contoured and deformable pad surfaces, this design enables a wide range of grasps: paper-thin precision pinches (similar to parallel-jaw grippers), power grasps on objects as small as a pencil and as large as a two-liter soda bottle, and caging grasps on irregular objects.

The overall morphology of the gripper was designed by evaluating the range of grasp primitives achievable by the human hand (Feix et al., 2016; Cutkosky, 1989). The goal of the device was not to replicate every human grasp type, but rather to achieve the functional outcome of each grasp while minimizing the active degrees of freedom and maintaining success across the full range of target tasks.

For many common grasps (picking up a tool by wrapping all fingers around it, for example), the Koala gripper operates much as a human hand would, using a power grasp driven by a single trigger. Where human and gripper morphology diverge, the operator adapts: a lateral pinch (holding a key between thumb and the side of the index finger) becomes a power pinch between the double thumb and fingers, achieving the same functional result with a single degree of freedom. We prioritized minimizing the number of triggers the operator interfaces with, keeping the device intuitive. By coupling each trigger motion to a corresponding finger motion, the gripper leverages the operator’s natural reflexes, and the learning curve is short.

Although it reduces the embodiment gap, the co-design constraint imposed real engineering challenges. The handheld variant needed to accommodate the full stroke and force of a human hand without breaking, while keeping the force ratio under 3:1 so that the device remains comfortable for extended collection sessions. The operator can squeeze as hard as they want, and that force will be transferred into the environment. The actuated variant replaces the human-operated linkage with a custom motor-driven ballscrew but shares the same finger geometry and sensor suite.

Because coupling the operator’s hand to a rigid mechanism limits its degrees of freedom, ergonomics had to be a priority. We designed and prototyped three sizes of the handheld device so that operators with a wide range of hand sizes can collect data comfortably over extended periods.

Our data collection system integrates the Koala handheld gripper with synchronized multimodal sensing. RGB imagery is captured in HD from wide-angle cameras mounted on the gripper, providing an egocentric view of the manipulation workspace. Gripper state, including width, velocity, and torque, is recorded through motor encoders at 100 Hz.
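Synchronizing streams that run at different rates usually comes down to matching timestamps. The sketch below is one minimal way to do it, not a description of the actual pipeline: it pairs each camera frame with the nearest gripper-state sample. The 100 Hz state rate comes from the text above, while the roughly 30 fps frame times, the width values, and the function names are assumptions for illustration.

```python
from bisect import bisect_left


def nearest_index(sorted_times, t):
    """Index of the entry in `sorted_times` closest to time `t`."""
    i = bisect_left(sorted_times, t)
    if i == 0:
        return 0
    if i == len(sorted_times):
        return len(sorted_times) - 1
    before, after = sorted_times[i - 1], sorted_times[i]
    # Ties go to the earlier sample.
    return i if (after - t) < (t - before) else i - 1


def align_states_to_frames(frame_times_ms, state_times_ms, states):
    """Pick the gripper state nearest in time to each camera frame."""
    return [states[nearest_index(state_times_ms, t)] for t in frame_times_ms]


# Gripper state at 100 Hz: one sample every 10 ms (timestamps in integer ms).
state_times_ms = list(range(0, 100, 10))
widths_mm = [50 - i for i in range(10)]  # hypothetical closing motion

# Camera frames at roughly 30 fps (assumed rate, not stated in the text).
frame_times_ms = [0, 33, 67]

aligned_widths = align_states_to_frames(frame_times_ms, state_times_ms, widths_mm)
print(aligned_widths)
```

Nearest-sample matching is the simplest choice. Interpolating between neighboring state samples is a common alternative when the signals change quickly relative to the sampling interval.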

Why Richer Observations Matter Even More Without Memory

Today’s robot policies are largely reactive. They observe the current moment and act without drawing on what happened seconds ago. This constraint is fundamental to how the policy was learned – for a policy to select the correct action, its current observation must contain enough information to fully determine the task state. Without memory, two meaningfully different states must never produce the same sensor readings; if they do, the policy cannot distinguish between them and fails. Force and proprioceptive data directly address this problem. They resolve ambiguities that vision alone cannot, making the instantaneous observation rich enough to act on even when the policy has no recollection of what came before. Architectures that incorporate memory and temporal context would significantly relax this requirement, and remain an active area of research that we will discuss later in this series.
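The requirement on a memoryless policy can be checked on a dataset: if the same observation is paired with different actions, no reactive policy can fit both. The sketch below is a hedged illustration with a hypothetical function, invented labels, and symbolic stand-ins for images and force readings. It counts such collisions with and without a force channel, echoing the bottle-cap example from earlier.

```python
from collections import defaultdict


def aliased_observations(demonstrations, channels):
    """Find observations (restricted to `channels`) paired with more than one action."""
    actions_by_observation = defaultdict(set)
    for observation, action in demonstrations:
        key = tuple(observation[c] for c in channels)
        actions_by_observation[key].add(action)
    return {
        key: actions
        for key, actions in actions_by_observation.items()
        if len(actions) > 1
    }


# Symbolic stand-ins: real data would hold images and continuous force values.
demonstrations = [
    ({"image": "hand_on_cap", "force": "low"}, "close_grip"),
    ({"image": "hand_on_cap", "force": "high"}, "twist"),
    ({"image": "hand_above_cap", "force": "low"}, "lower_hand"),
]

vision_only = aliased_observations(demonstrations, ["image"])
vision_and_force = aliased_observations(demonstrations, ["image", "force"])

print(vision_only)       # one image maps to two different actions
print(vision_and_force)  # adding force separates the two states
```

With vision alone, the resting and squeezing states collide. Adding the force channel gives each state its own observation, which is the sense in which richer sensing substitutes for memory.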

Long-Term Vision

Our data collection initiative has an aggressive roadmap for the next year. The immediate focus is on demonstrating force-mediated manipulation through a battery installation task: a bracket is seated on a battery frame, nuts are tightened to secure the cells, connectors are mated, and the assembly is verified. This multi-step sequence requires precise force control throughout.

The longer-term vision centers on standalone capture devices that can leave the lab and collect data in environments we cannot easily build indoors. For example, we want to be able to take a Koala device to a beach to pick up litter. In tasks like this, the data collection itself does some good in the world, and the setting exercises the kinds of variation that laboratory settings cannot reproduce: irregular terrain, weather, novel objects, and the full distribution of lighting and clutter that real environments contain.

Collecting data is only the beginning. The question of how to combine heterogeneous data sources (simulation, teleoperation, handheld demonstration, and internet-scale pretraining) into a coherent training recipe is where many of the interesting research questions lie. Each source of data makes different assumptions about action spaces and sensor availability. Combining them naively introduces conflicts between these assumptions that degrade policy performance.
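To make the mismatch between sources concrete, the sketch below represents samples from different sources in one record type and reports which modalities each actually provides. Everything here is a hypothetical illustration: the `Sample` fields, the file names, and the choice of which source carries which signal are assumptions, loosely following the descriptions earlier in this post.

```python
from dataclasses import dataclass
from typing import Optional, Tuple

MODALITIES = ("image", "force_n", "action")


@dataclass
class Sample:
    source: str
    image: Optional[str] = None                 # stand-in for pixel data
    force_n: Optional[float] = None             # grip force in newtons
    action: Optional[Tuple[float, ...]] = None  # command in that source's action space


def modality_mask(sample):
    """Map each modality name to whether this sample provides it."""
    return {name: getattr(sample, name) is not None for name in MODALITIES}


def shared_modalities(samples):
    """Modalities that every sample in the batch provides."""
    return [
        name for name in MODALITIES
        if all(modality_mask(s)[name] for s in samples)
    ]


batch = [
    Sample("internet_video", image="frame_000.jpg"),
    Sample("handheld", image="frame_104.jpg", force_n=12.5, action=(0.02,)),
    Sample("simulation", image="render_007.png", force_n=3.0, action=(0.01,)),
]

masks = [modality_mask(s) for s in batch]
common = shared_modalities(batch)
print(common)
```

A training recipe has to decide what to do with the gaps such masks expose, for example by masking losses per modality. Even where two sources both provide an action, the action spaces may not mean the same thing, which a mask alone does not capture.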

In the next post, we will explore how simulation complements the real-world data described here – generating synthetic training data at scale and closing the gap between virtual and physical environments.